Displaying items by tag: Intel

Intel Investigating MSI Data Breach and Private Code Signing Key Theft

Yesterday we reported on a ransomware attack that impacted PC and component manufacturer MSI. When MSI disclosed the attack, they claimed there was no significant impact, but failed to consider that most, if not all, modern ransomware attacks also incorporate exfiltration techniques to ensure a ransom is paid. In this case, the group Money Message had exfiltrated a claimed 1.5TB of data that included firmware, source code, and databases. This sounds a bit significant at this point.

Dell and others move to disable Intel's Management Engine

It seems that PC makers are not happy with Intel’s Management Engine (IME) and the flaws that keep being found in it. The original flaw allowed attackers a clean way to compromise a system, including uploading malware and exfiltrating data. This could be done in a way that bypassed most security systems and even allowed for tampering with the UEFI BIOS if the attacker was sophisticated enough. To their credit, Intel did warn people and manufacturers about this and patched it fairly quickly. The problem is, now that the cat is out of the bag about one flaw, there are sure to be more.

AMD’s 11-year journey to relevance gets an epic finish.

In the early 2000s AMD was on top of the world; they had a desktop processor that everyone wanted. AMD was handily beating Intel in terms of performance and pushing x86-64 computing out to the world. In 2006 AMD made an odd decision to buy GPU maker ATi for a rather hefty sum. This one act threw AMD off their game so badly that they operated in the red for many years after the purchase. However, over the last 2-3 years AMD has made some well-planned changes internally. These changes included dropping the mobile focus and creating the RTG (Radeon Technologies Group). They have secured some technologies through purchases and cleaned up some deals that were hurting them financially.

Microsoft finds active exploit of Intel's AMT vulnerability

Remote management and access tools are great things for IT staff to use, but if they are not set up correctly or they have bugs hidden in the code they can quickly become a nightmare. Intel’s AMT (Active Management Technology) suite of tools was recently found to have a rather nasty little surprise hidden in it. It seems that a flaw in the way its SOL (Serial over LAN) tool runs, combined with the way Windows deals with AMT, allowed attackers to use AMT to deploy malware and to exfiltrate data from a compromised system.

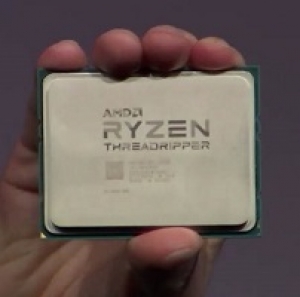

Did Intel Respond to Threadripper, or just to Ryzen in General?

Computex 2017 is done, the hangovers are pretty much gone, and what do we have to show for it? Well… we have a new fight for fanboys and review sites alike to talk about. This is the fight between AMD’s Threadripper and Intel’s new X-series CPUs. The crux of the argument is that Intel’s 18-core i9 with 44 PCIe lanes is a reactionary move to a leak of Threadripper’s specifications.

AMD Drops the Ryzen based Threadripper CPU on Computex

Earlier today, we talked about Intel’s response to AMD’s Ryzen success so we thought we would give some love to AMD as well. Although we are not out at Computex (again) we are still getting news from different manufacturers. We are also getting information from a few people that are in the sweltering heat…. Oh yeah; back to talking about AMD’s response to Intel’s Core i9 X-series.

Intel Launches the new X-Series at Computex 2017

With Computex going on there has already been lots of news hitting the street about new PC gear. Everything from GPUs, Laptops, Cases, overclocking world records, you know the stuff. We have also heard that Intel has kicked a new series of CPUs out the door. These are their “X” series of CPUs and are pretty much a direct response to the performance that AMD’s Ryzen has shown off.

AMD shares up after licensing moves and Radeon success

It seems that AMD’s recent licensing moves and the press that Zen has been getting have given investors more confidence in the company. On Friday this confidence pushed AMD’s share price up by almost 10% to $6.18 (the 52-week high). As of this writing, AMD’s share price has dropped some, but is still up by a little more than 5% ($6.14). Some have seen this as proof that AMD is going to have a comeback soon and that Intel should be very worried.

Bendgate: it’s not just for iPhones anymore.

Not all that long ago someone found out that Apple’s iPhone was not all that strong and could be damaged with little pressure. This issue became known as Bendgate… the use of the word gate still following us from the days of Watergate when Richard Nixon was the president. However, there now seems to be another item that could be dropped into the Bendgate fiasco: some of Intel’s Skylake CPUs.

Intel Launches new Broadwell CPUs with Iris Pro at Computex

At Computex 2015 Intel announced a few nice additions to the Broadwell lineup which bring Iris Pro graphics to the table. The new CPUs are touted as the first LGA CPUs to have Iris Pro in them, which might not seem like a big deal, but if leveraged right could have a significant impact on the market. Intel is also pushing out mobile Core i5 CPUs with Iris Pro 6200 with this launch, making their more advanced graphics available to a broader range of products.