Displaying items by tag: Xen

Microsoft Takes Aim At VMware Again, But Do They Even Have The Right Weapons?

In Microsoft news of a different, albeit similar, nature to what we have been seeing with Windows 8 and Surface, it looks like Steve Ballmer wants to reignite the war between Microsoft and VMware. Ever since the launch of Windows Server 2008, Microsoft has tried to realize its vision of maintaining a data center ecosystem. Microsoft was more than slightly put out when VMware entered the scene back in 1999 and started pushing its new virtualization technology (including the hypervisor) around the market. VMware's continued success in 2001-2003 was something of a thorn in Microsoft's side, and it was this that led Microsoft to buy Virtual PC and Virtual Server from Connectix in early 2003.

US-CERT Finds a Flaw in Some 64-Bit Virtualization Host Software Running on Intel CPUs

There is a new security warning for some people running virtualized systems on Intel CPUs. According to researchers at US-CERT (the United States Computer Emergency Readiness Team), the issue exists in some 64-bit operating systems when they run as a hypervisor-style host (provided the host OS is also 64-bit). The vulnerability allows escalation of privileges and a potential guest-to-host escape.

DecryptedTech's new Enterprise Testing Lab

DecryptedTech is now moving into enterprise-class testing. To accomplish this, we have built a small enterprise-class network in our lab, complete with two iSCSI SANs, two NAS devices, multiple Gigabit switches, and two ESX hosts running multiple VMs to keep things interesting. We will begin testing enterprise-class hardware and software, looking at how the technology differs from average consumer-class products and how it will benefit consumers as it trickles down to their market space. Our first product is already in the lab, but before we kick that off, let’s talk about the new DecryptedTech Enterprise Lab in detail.

The Switches -

The backbone of our lab consists of five Gigabit switches. Two of these are from TRENDnet: the TEG-160WS and the TEG-240WS. Both are Web Smart managed switches, with 2Gbps trunks set up between them for faster switching. Next we have a TRENDnet TPE-80WS PoE (Power over Ethernet) 8-port Gigabit switch, which offers considerably more controls than the TEG line and is the master (root) switch for the RSTP (Rapid Spanning Tree Protocol) topology in place. Our second vendor in the lab is NETGEAR, which provided its ProSafe GS110TP PoE 10-port Gigabit switch (two of these ports are fiber uplinks) and a GS108T 8-port Gigabit switch. As mentioned, the switches form an RSTP topology, and each one has different components attached to ensure that the load is distributed across the network backbone.
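For readers less familiar with spanning tree, the "master" switch in an RSTP topology is the root bridge, and it is chosen by a simple rule: the lowest bridge ID wins, where the ID is the bridge priority followed by the switch's MAC address. The Python sketch below models only that election step (not port roles or BPDU exchange). The MAC addresses are made-up placeholders, and the priority of 4096 on the TPE-80WS is an assumption about how it was made root; we have not described its actual configuration here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Bridge:
    """A switch taking part in RSTP. All values used below are hypothetical."""
    name: str
    priority: int  # 0-61440 in steps of 4096; most switches default to 32768
    mac: str       # base MAC address (placeholder values, not real hardware)

    def bridge_id(self) -> tuple[int, int]:
        # Priority first, MAC second: comparing this tuple is equivalent to
        # comparing the full bridge ID as one number.
        return (self.priority, int(self.mac.replace(":", ""), 16))


def elect_root(bridges: list[Bridge]) -> Bridge:
    """Return the bridge with the numerically lowest bridge ID (the root)."""
    return min(bridges, key=lambda b: b.bridge_id())


# Hypothetical lab: only the TPE-80WS has had its priority lowered.
lab = [
    Bridge("TEG-160WS", 32768, "02:00:00:00:00:01"),
    Bridge("TEG-240WS", 32768, "02:00:00:00:00:02"),
    Bridge("TPE-80WS", 4096, "02:00:00:00:00:03"),
    Bridge("GS110TP", 32768, "02:00:00:00:00:04"),
    Bridge("GS108T", 32768, "02:00:00:00:00:05"),
]

print(elect_root(lab).name)
```

If every switch were left at the default priority of 32768, the tie would be broken by MAC address alone, which is why it is common practice to lower the priority on the switch you actually want as root.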

The Storage -

Our lab has three NAS devices, one of which is fully iSCSI capable (and works with VMware). The two non-iSCSI NAS devices are a Seagate BlackArmor 440 and a Thecus 5200 Pro. The Thecus 5200 Pro has 3TB of space and serves as an indirect file server, while the BA-440 has 4TB and acts as a media storage server and backup target. The last NAS on the list is a Synology DS 201; it has a full 1TB of space and holds the image files used to deploy VMs and to install software into the virtual environment.

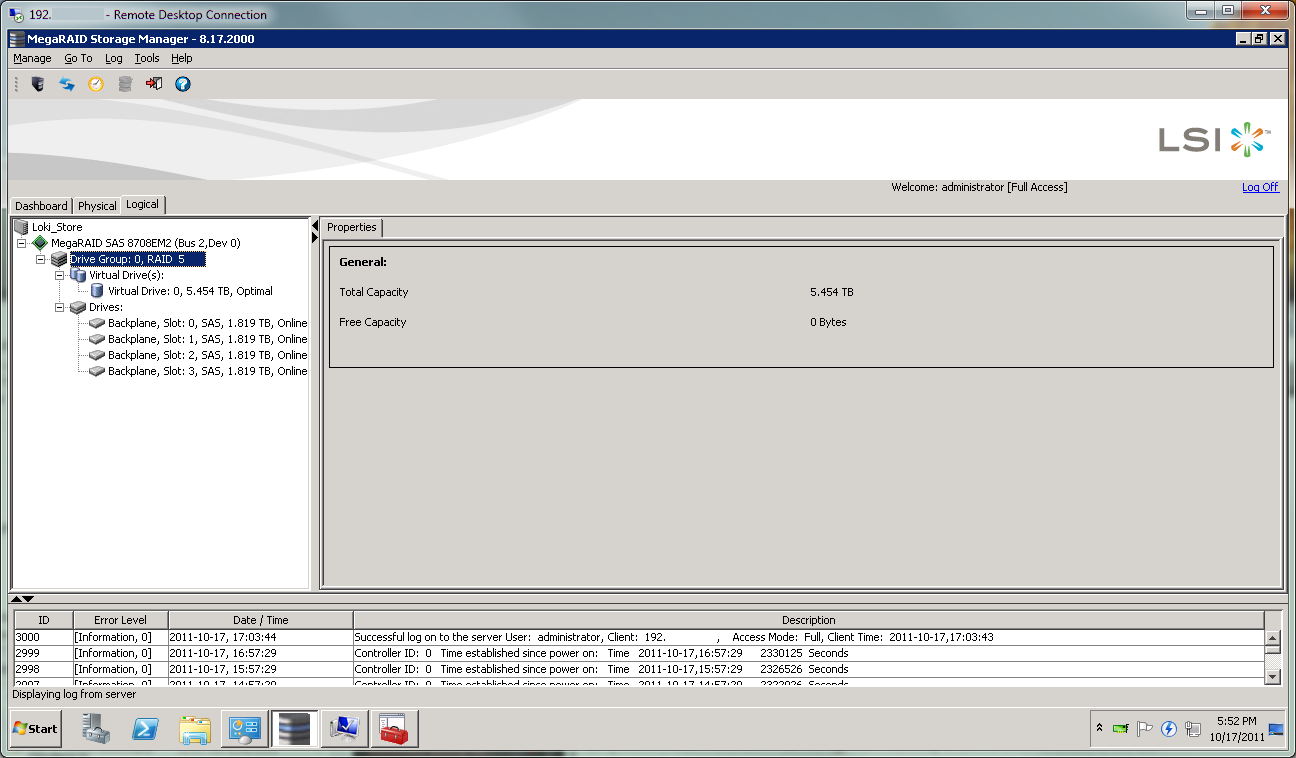

The last storage box is one we are rather proud of. It is a custom-built NAS/SAN with an AMD Phenom II X4 910e, 4GB of memory, a Minix 890GX Mini-ITX motherboard, and a 250GB OS drive. For the OS we dropped in Windows Storage Server 2008 R2. Of course, that is not the part we are most proud of. For the actual storage we went with four Seagate 2TB Constellation ES nearline SAS 2.0 drives (ST32000444SS) running in RAID 5 on an LSI MegaRAID SAS 8708EM2 6Gb/s PCIe controller. It is this device, with its two teamed NICs, that provides the central iSCSI-based storage for our VMware cluster.
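RAID 5 stripes data across the drives and stores one XOR parity block per stripe, so the array gives up one drive's worth of capacity and can survive the loss of any single drive. The sketch below illustrates both points with tiny 4-byte blocks. A real controller such as the MegaRAID works on much larger stripe units and rotates parity across drives, so treat this as an illustration of the math, not of the controller's implementation; the block contents are arbitrary placeholders.

```python
from functools import reduce


def raid5_usable_tb(drive_count: int, drive_tb: float) -> float:
    """Usable RAID 5 capacity: one drive's worth of space goes to parity."""
    if drive_count < 3:
        raise ValueError("RAID 5 needs at least three drives")
    return (drive_count - 1) * drive_tb


def xor_blocks(blocks: list[bytes]) -> bytes:
    """XOR equal-length blocks together, byte by byte."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


# One stripe across the four drives: three data blocks plus their parity.
# (Real RAID 5 rotates which drive holds parity from stripe to stripe.)
d0, d1, d2 = b"VMFS", b"data", b"blk!"
parity = xor_blocks([d0, d1, d2])

# Simulate losing the drive that held d1, then rebuild it from the survivors.
rebuilt = xor_blocks([d0, d2, parity])

print(raid5_usable_tb(4, 2.0))  # 6.0
print(rebuilt == d1)            # True
```

With four 2TB drives that works out to 6TB usable in decimal units, or roughly 5.46TiB as Windows reports it, before file system overhead.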

The VMware Cluster -

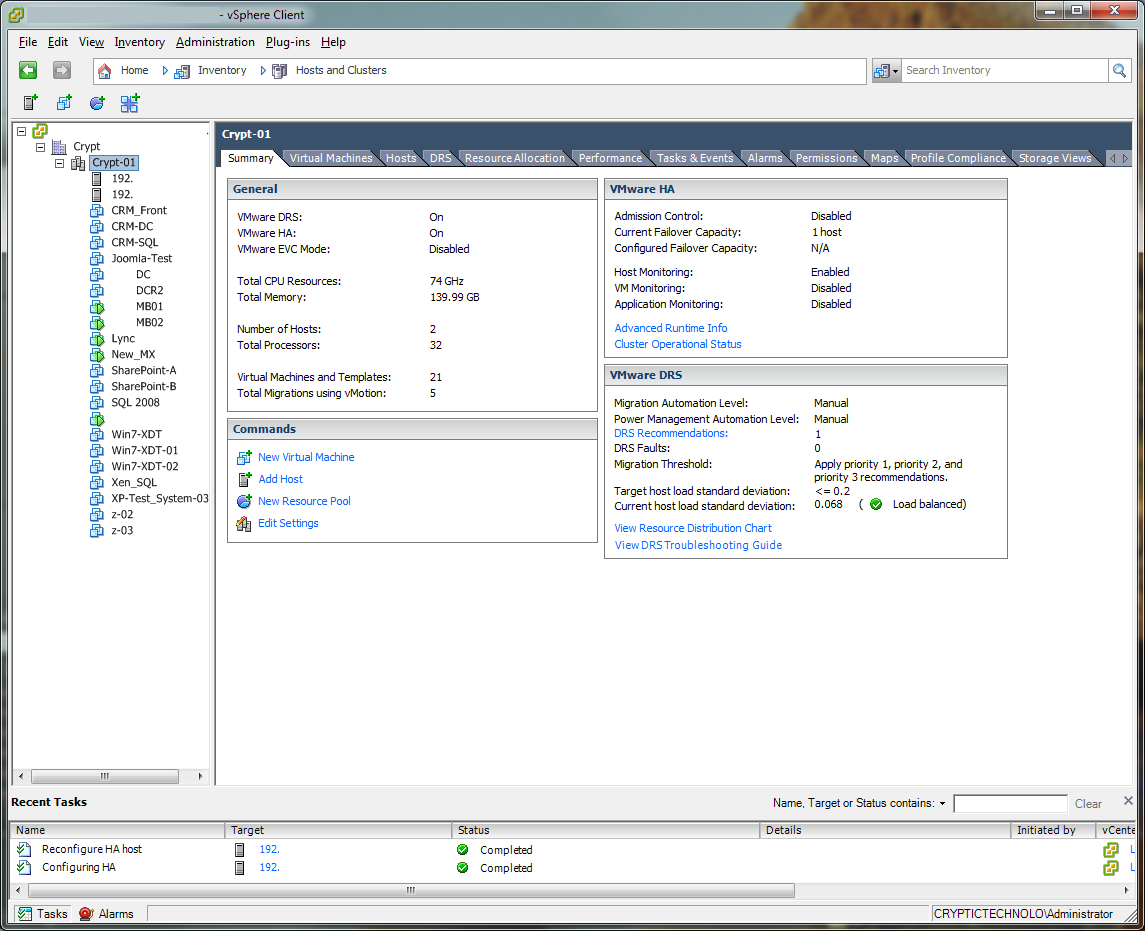

To make sure that we covered all of our bases, we built two VMware ESX hosts for a single cluster: one with Intel Xeons and the other with AMD Magny-Cours CPUs. Both systems have Kingston Server Premier memory installed (128GB of it between the two systems). The motherboards in each are from Asus and represent the mid-range of their server lineup.

The Intel system specs are as follows:

2x Intel Xeon L5530 2.4GHz CPUs

48GB of Kingston Server Premier RAM (6 x 8GB)

2x Kingston SSDNow 128GB drives in RAID 1 (for the ESX host software)

Asus Z8NA-D6 motherboard

Cooler Master UCP 1100 Power Supply

The AMD half of the cluster looks like this:

2x AMD Opteron 6176 SE CPUs (12 cores each, for 24 physical cores)

92GB of memory (80GB of Kingston Server Premier as 10 x 8GB, plus 12GB of Kingston Value Select server memory as 6 x 2GB)

2x Seagate 500GB Savvio II SAS 2.0 drives in RAID 1

Asus KGPE-D16 Motherboard

Cooler Master UCP 1100 Power Supply
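The memory figures in the two spec lists can be cross-checked with a few lines of arithmetic. This sketch simply tallies the DIMM counts and sizes listed above; it shows that the 128GB quoted earlier counts only the Server Premier modules, while the cluster as a whole has 140GB installed. The host labels are just descriptive keys chosen for the example.

```python
# DIMM population per host, copied from the spec lists above:
# (module count, module size in GB, Kingston product line)
Dimms = list[tuple[int, int, str]]

hosts: dict[str, Dimms] = {
    "Intel (Asus Z8NA-D6)": [(6, 8, "Server Premier")],
    "AMD (Asus KGPE-D16)": [(10, 8, "Server Premier"), (6, 2, "Value Select")],
}


def host_total_gb(dimms: Dimms) -> int:
    """Total installed memory for one host."""
    return sum(count * size for count, size, _ in dimms)


def product_line_total_gb(line: str) -> int:
    """Memory from one Kingston product line, summed across both hosts."""
    return sum(
        count * size
        for dimms in hosts.values()
        for count, size, product in dimms
        if product == line
    )


for name, dimms in hosts.items():
    modules = sum(count for count, _, _ in dimms)
    print(f"{name}: {host_total_gb(dimms)}GB in {modules} DIMMs")

print("Server Premier total:", product_line_total_gb("Server Premier"), "GB")
print("Cluster total:", sum(host_total_gb(d) for d in hosts.values()), "GB")
```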

The cluster is running VMware ESX 4.1 (moving to 5.0 soon) and currently hosts 30 virtual machines, all stored on our custom-built NAS/SAN. Not all of these systems are powered on 24/7 (my power bill would be outrageous), but they are all on and operational when we have hardware in the lab that needs testing. Under normal conditions about seven servers are live. These include an Exchange cluster (Database Availability Group), a SQL server, and a virtualized domain controller. Other servers that run under testing conditions include two additional SQL servers (SharePoint and CRM), a two-node SharePoint farm, a XenDesktop test setup with three desktops, a web server with a full copy of DecryptedTech on it, and a virtualized Windows Server 2008 R2 domain controller. We feel this should be able to simulate the load of a fairly average business network.

In addition to the virtual systems, there is a standalone domain controller (Windows Server 2008 R2) and a complete Microsoft Forefront Threat Management Gateway to control external access to the test environment.

All in all, the testing lab has taken a giant leap forward, and we hope to bring you some in-depth reviews of hardware and software that, while outside the average consumer's range, will give you a glimpse of what is coming down the road for the consumer market in the not-so-distant future.
