Displaying items by tag: Text to Speech

The Jabra Eclipse Bluetooth headset sounds off in the lab

The market for hands-free devices is growing very quickly. This growth has been driven by multiple factors, including legal ones: some states require them when driving, which has pushed the market forward. Sadly, the devices themselves have not really moved forward over the last few years. They have gotten smaller, but the feature set and battery life have not changed much. Jabra has been working on changing that over the last year and has come out with some great new hands-free kits, including the Jabra Eclipse, which is what we are taking a look at today.

We Look at Siri's Evil Twin, Iris for Android

Taking their cues from Apple’s Siri, a group of developers came up with a natural speech recognition algorithm similar to Siri in 8 hours. The difference is that this one is for Android. The new app (available as an alpha release in the Android Market) is called Iris, and for an 8-hour project it is very functional. We were rather impressed after we had a few hours to tinker with it.

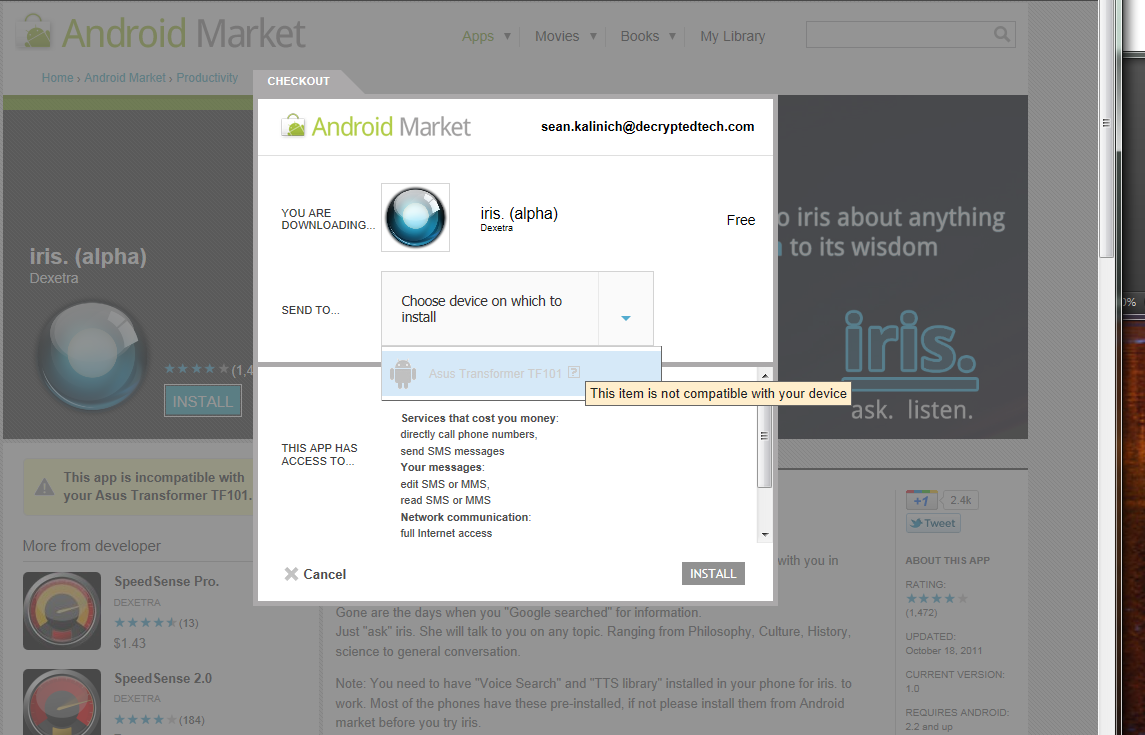

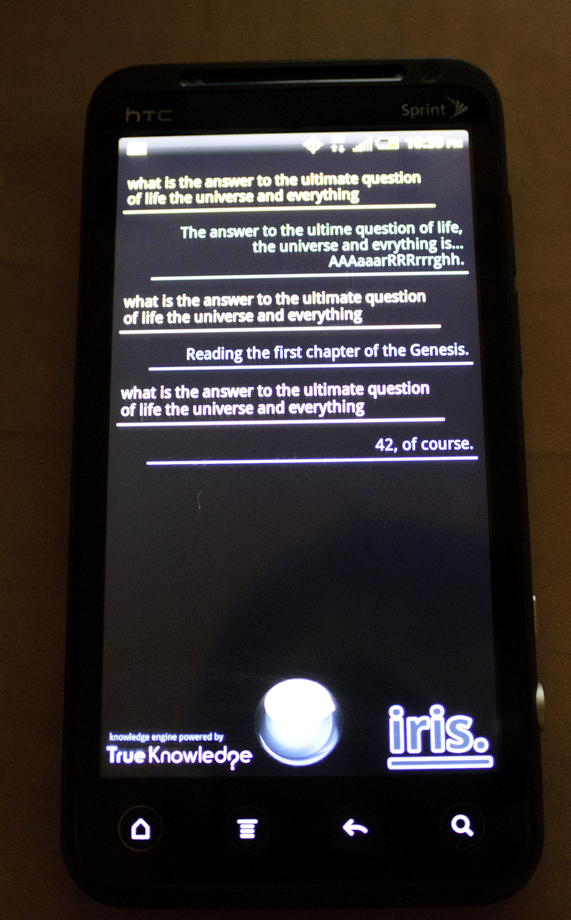

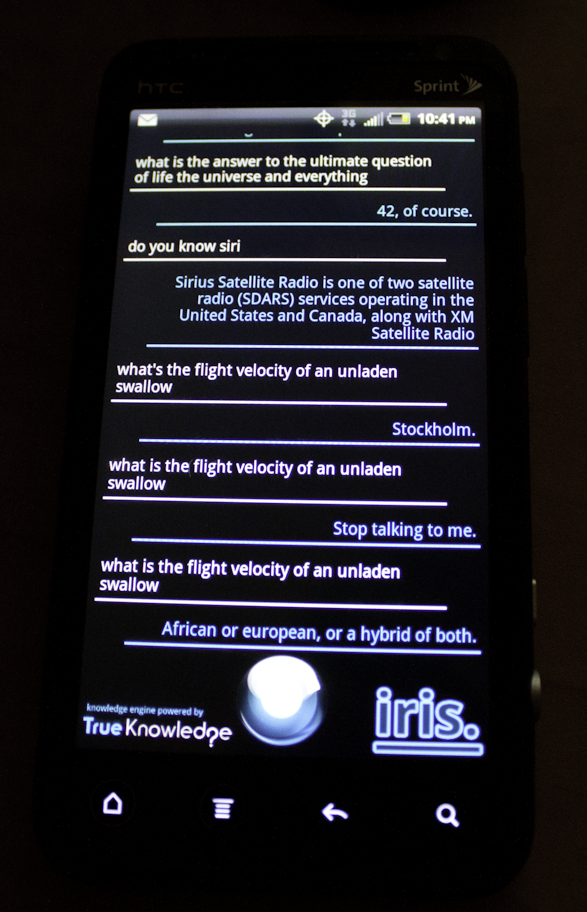

After we first heard that someone had done this, we immediately went to the Market to see if we could get it there. The first thing we noticed was that while we could grab it for our phone, there was nothing visible in the Market on our Transformer tablet. In fact, a quick run to the Market on our desktop PC showed us that Iris is not compatible with our Transformer. The other thing we noticed is that you have to grab a dependency application called Speech Synthesis. This is what takes the text-based responses and turns them into speech for you to enjoy.

One of the first questions we put to Iris was the big one: “What is the Answer to the Ultimate Question of Life, the Universe and Everything?” It took three tries, but we got the answer we were looking for. However, it had some problems with some of the more mundane ones, like “What is the forecast for the weather near Orlando”. I think my favorite answer for that question was “Beyond your Ability to comprehend”.

Now, I know this is nowhere near as polished or complete as Siri is on the iPhone, but what I did like was how accurate the app was at picking up what I was saying. Even when I used contractions like what’s or can’t, it knew what I was looking for. It was also able to differentiate between declarations and questions.

We are still playing around with this very interesting software, but we have to say that if this is what the group at Dexetra can do in only 8 hours, Apple should be worried about what happens when they put some real time and effort into it.