From The Blog

- ConnectWise Slash and Grab Flaw Once Again Shows the Value of Input Validation: We Talk to Huntress About Its Impact

Written by Sean Kalinich. Although the news of the infamous ConnectWise flaw, which allowed for the creation of admin accounts, is a bit cold, it still is one that… Written on Tuesday, 19 March 2024 12:44 in Security Talk. Read 715 times.

- Social Manipulation as a Service – When the Bots on Twitter Get Their Check Marks

Written by Sean Kalinich. When I started DecryptedTech it was to counter all the crap marketing I saw from component makers. I wanted to provide people with a clean… Written on Monday, 04 March 2024 16:17 in Editorials. Read 1589 times.

- To Release or Not to Release a PoC or OST, That Is the Question

Written by Sean Kalinich. There is (and always has been) a debate about the ethics and impact of the release of a Proof-of-Concept exploit for an identified vulnerability and Open-Source… Written on Monday, 26 February 2024 13:05 in Security Talk. Read 1124 times.

- There was an Important Lesson Learned in the LockBit Takedown and it was Not About Threat Groups

Written by Sean Kalinich. In what could be called a fantastic move, global law enforcement agencies attacked and took down LockBit’s infrastructure. The day of the event was filled… Written on Thursday, 22 February 2024 12:20 in Security Talk. Read 1100 times.

- NetSPI’s Offensive Security Offering Leverages Subject Matter Experts to Enhance Pen Testing

Written by Sean Kalinich. Black Hat 2023 – Las Vegas. The term offensive security has always been an interesting one for me. On the surface it brings to mind reaching… Written on Tuesday, 12 September 2023 17:05 in Security Talk. Read 2143 times.

- Black Kite Looks to Offer a Better View of Risk in a Rapidly Changing Threat Landscape

Written by Sean Kalinich. Black Hat 2023 – Las Vegas. Risk is an interesting subject and has many different meanings to many different people. For the most part, risk… Written on Tuesday, 12 September 2023 14:56 in Security Talk. Read 1869 times.

- Microsoft Finally Reveals How They Believe a Consumer Signing Key Was Stolen

Written by Sean Kalinich. In May of 2023, a few sensitive accounts reported to Microsoft that their environments appeared to be compromised. Due to the nature of these accounts,… Written on Thursday, 07 September 2023 14:40 in Security Talk. Read 2139 times.

- Mandiant Releases a Detailed Look at the Campaign Targeting Barracuda Email Security Gateways; I Take a Look at What This All Might Mean

Written by Sean Kalinich. The recent attack that leveraged a 0-day vulnerability to compromise a number of Barracuda Email Security Gateway appliances (physical and virtual, but not cloud) was… Written on Wednesday, 30 August 2023 16:09 in Security Talk. Read 2109 times.

- Threat Groups Return to Targeting Developers in Recent Software Supply Chain Attacks

Written by Sean Kalinich. There is a topic of conversation that really needs to be talked about in the open. It is the danger of developer systems (personal and… Written on Wednesday, 30 August 2023 13:29 in Security Talk. Read 1903 times.

Recent Comments

- Sean, this is a fantastic review of a beautiful game. I do agree with you… Written by Jacob (2023-05-19 14:17:50) on Jedi Survivor – The Quick, Dirty, and Limited Spoilers Review

- Great post. Very interesting read but is the reality we are currently facing. Written by JP (2023-05-03 02:33:53) on The Dangers of AI; I Think I Have Seen this Movie Before

- I was wondering if you have tested the microphone audio frequency for the Asus HS-1000W? Written by Maciej (2020-12-18 14:09:33) on Asus HS-1000W wireless headset impresses us in the lab

- Thanks for review. I appreciate hearing from a real pro as opposed to the blogger… Written by Keith (2019-06-18 04:22:36) on The Red Hydrogen One, Possibly One of the Most “misunderstood” Phones Out

- Have yet to see the real impact but in the consumer segment, ryzen series are… Written by sushant (2018-12-23 10:12:12) on AMD’s 11-year journey to relevance gets an epic finish.

Most Read

- Microsoft Fail - Start Button Back in Windows 8.1 But No Start Menu. Written on Thursday, 30 May 2013 15:33 in News. Read 116533 times.

- We take a look at the NETGEAR ProSafe WNDAP360 Dual-Band Wireless Access Point. Written on Saturday, 07 April 2012 00:17 in Pro Storage and Networking. Read 87518 times.

- Synology DS1512+ Five-Bay NAS Performance Review. Written on Tuesday, 12 June 2012 20:31 in Pro Storage and Networking. Read 82058 times.

- Gigabyte G1.Sniper M3 Design And Feature Review. Written on Sunday, 19 August 2012 22:35 in Enthusiast Motherboards. Read 80344 times.

- The Asus P8Z77-M Pro Brings Exceptional Performance and Value to the Lab. Written on Monday, 23 April 2012 13:02 in Consumer Motherboards. Read 71007 times.

Displaying items by tag: nVidia

Lapsus$ Claims They Have Some Microsoft Azure Source Code; Microsoft Is Investigating the Claim

The Lapsus$ group has been in the news recently for stealing source code from some high-profile targets. These targets have included companies like NVIDIA, Samsung, Vodafone, and Ubisoft. The NVIDIA event was noteworthy because it included a claim that NVIDIA hacked the attackers back in order to encrypt the data that had been taken out of its environment.

Samsung Confirms Breach and Theft of Source Code

Earlier today we reported that the same group that hit NVIDIA and stole source code along with employee logins also hit Samsung and stole around 190GB of source code related to how Galaxy mobile devices operate. According to the Lapsus$ group, the data covers the bootloader, the TrustZone and its trusted apps, how Galaxy devices encrypt data, and other fundamental operating code.

Samsung Might be the Next Victim of the Same Group that Hacked NVIDIA

The Lapsus$ group, the same group that broke into NVIDIA, stole corporate data, and had its attack VM encrypted, appears to have also broken into Samsung. Lapsus$ has leaked what it claims is source code for several sensitive applications, including apps that run in the TrustZone on Samsung mobile devices.

Nvidia-ARM Deal Off, Citing Regulatory Challenges

In September 2020, Nvidia announced an agreement to acquire ARM Holdings from SoftBank Group Corp. The deal was not surprising, but it did send waves through the industry. The concerns around this deal were, and are, the same as the ones currently surrounding the Microsoft-Activision deal. Given the level of competition in the industry, would Nvidia use its new purchase to create roadblocks for its competitors? Nvidia has always maintained that it would never do anything like this, but its assurances were never enough to get past regulators.

Is HBM a viable technology for GPUs? Yes, Yes it is… just not right now

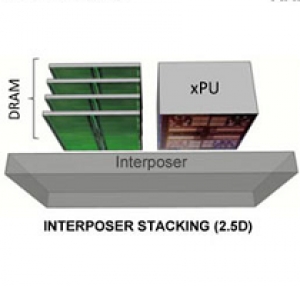

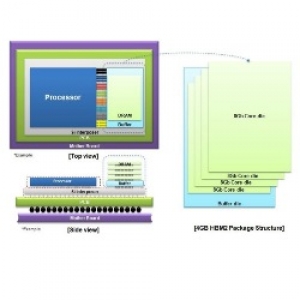

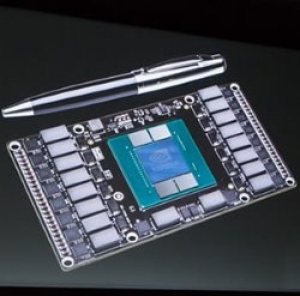

Over the last couple of days, we have received information indicating that NVIDIA is not moving to HBM2 for its consumer GPUs (outside of some extremely high-end models). Instead, it appears to be focusing on the improvements found in GDDR5X and GDDR6. Conversely, AMD appears to be focusing on HBM for many of its high-end and even some mid-range cards. These two very different paths have sparked something of a debate among fans of both companies (as you can imagine). The questions are: why choose one over the other at this point, and is HBM a truly viable option for AMD?

NVIDIA could be testing two different models of Pascal for an April Launch

The experts have all weighed in: 2016 will be the year of Virtual Reality. The problem is that the experts are very often wrong. Still, that has not stopped multiple companies from pushing out new VR headsets, APIs, development kits, and more. The craze has gone so far as to start affecting the way companies design core hardware. We already know that AMD is pushing for VR mastery, both with new products and by showing which existing products also offer a level of VR support.

Is Virtual Reality Really the Next “It” Technology?

It is said that nature abhors a vacuum, and that is certainly true. Something will come along to fill the void if we let nature take its course. Unfortunately, this law is a little mutated in the consumer electronics market, especially in the PC component world. Here it reads: the market cannot stand not having an “It” technology, so we must create one. It seems that for the last few years we have been watching this happen.

The race to HBM2 for the future VR GPU performance crown

On the 19th of January, Samsung announced that it had begun mass production of its 4GB HBM2 3D memory. This announcement was the starting gun for the next big GPU race. As we know, both AMD and NVIDIA are racing to get viable products to market in time for Oculus and HTC to launch their consumer VR headsets. Up until now, we have really only seen the developer kits, and while these have been impressive, they are not what most people are hoping for in the final product.

Rumors say NVIDIA might launch Pascal in April in time with Oculus VR headsets

Yesterday we talked about the possibility that AMD will launch a dual-GPU R9 Fury X card geared for 4K and VR. This is certainly welcome news for most AMD fans and for fans of virtual reality. It was no coincidence that the first time we saw this card in operation was at a big VR event in LA, or that its launch is rumored to coincide with the launch of the Oculus and HTC Vive headsets. This move would put a very high-end AMD card on the market around April/May of this year.

Things Just Got Interesting in the NVIDIA v. Samsung Patent Battle

Remember that little patent squabble NVIDIA and Samsung got into last year? Well, some things have happened, and they are not all that good for NVIDIA. If you have already forgotten about this incident (we do not blame you), we will fill you in. NVIDIA filed a complaint with the ITC against Samsung and Qualcomm, claiming that Samsung was using technology that violated patents NVIDIA owned (programmable shaders, parallel processing, etc.). NVIDIA also filed a patent lawsuit at the same time.